Execution-level AI narratives

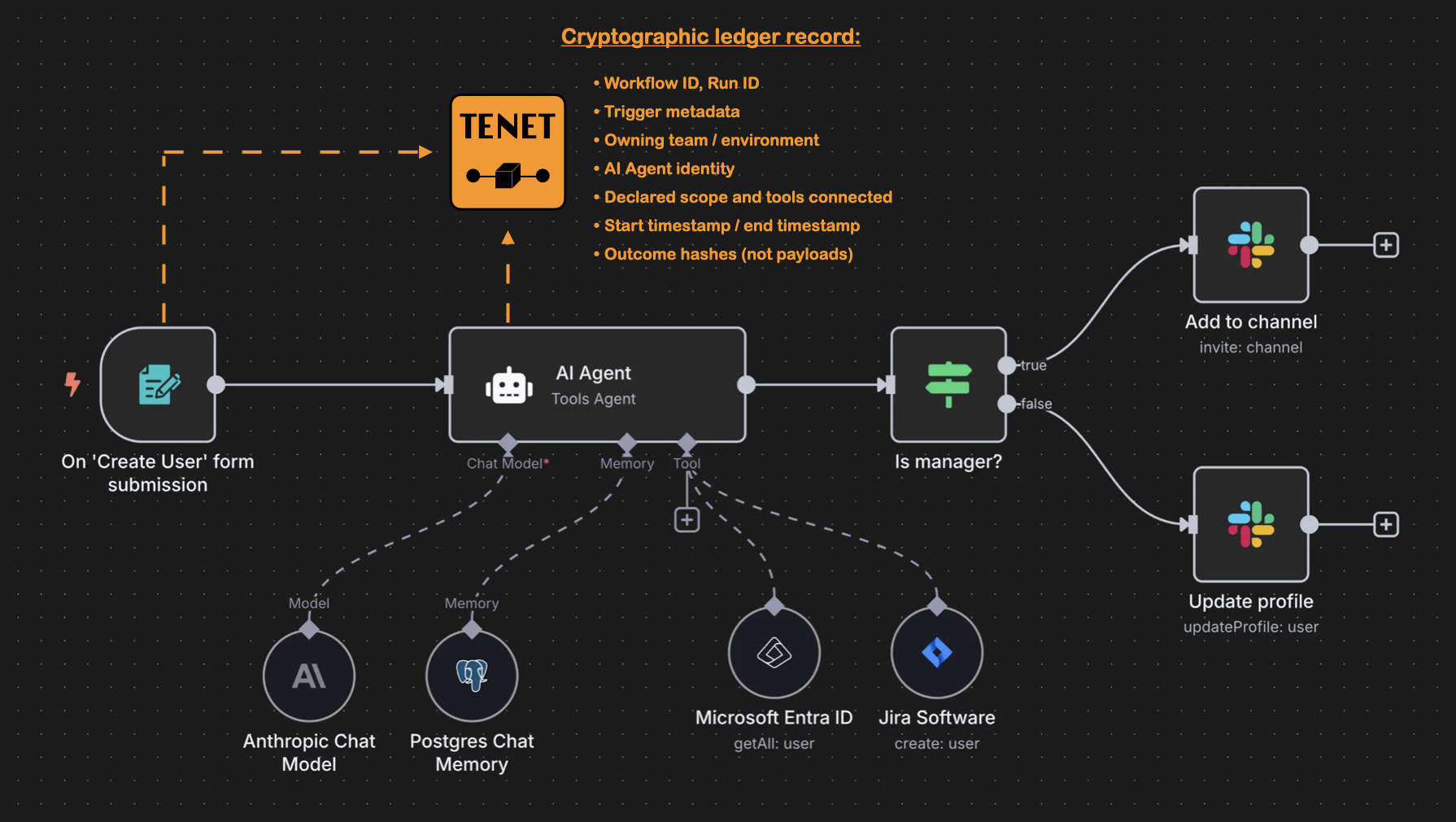

Each run gets a concise plain-language explanation of what happened, linked to the underlying evidence context for verification.

Local AI insights + independent crypto-digest anchoring = clear business context with tamper-evident integrity proof.

What is TenetSafe

TenetSafe is a local-first workflow intelligence and evidence integrity platform for n8n.

It gives teams day-to-day visibility into automations and independent cryptographic proof for audits, disputes, and incident reviews.

Rich execution evidence is captured, analysed, and kept inside your environment, while only hash-based crypto-digests are stored externally to verify evidence integrity on demand.

Four simple steps to operational clarity and defensible evidence.

Step 01

Collect local logs

Capture rich n8n execution evidence inside your environment.

Step 02

Generate local insights

Surface narratives, statistics, and risk signals for teams and leadership.

Step 03

Create no-PII digests

Compute deterministic hashes from local evidence artifacts, so the same evidence always reproduces the same digest.

Step 04

Anchor independently

Store no-PII digests externally to verify evidence integrity during disputes and audits.
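
Steps 03 and 04 can be sketched roughly as follows. This is a minimal illustration, not TenetSafe's actual implementation: the record fields, the canonical-JSON serialization, and the choice of SHA-256 are all assumptions made for the example. The point it shows is that only a fixed-length hex digest needs to leave the environment, and that recomputing the digest from local evidence later reveals any change.

```python
import hashlib
import hmac
import json


def compute_digest(evidence: dict) -> str:
    """Return a deterministic SHA-256 hex digest of an evidence record.

    Canonical JSON (sorted keys, fixed separators) makes the digest
    independent of key order, so the same evidence always hashes the same.
    """
    canonical = json.dumps(
        evidence, sort_keys=True, separators=(",", ":"), ensure_ascii=False
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify_digest(evidence: dict, anchored_digest: str) -> bool:
    """Recompute the digest locally and compare it with the anchored copy."""
    return hmac.compare_digest(compute_digest(evidence), anchored_digest)


# Hypothetical execution record; the field names are illustrative only.
record = {
    "workflow": "complaint-classification",
    "execution_id": "1234",
    "status": "success",
}

# Only this hex string would be stored externally (assumption).
anchored = compute_digest(record)

# Later, during an audit: unchanged evidence verifies, altered evidence does not.
tampered = {**record, "status": "error"}
print(verify_digest(record, anchored))
print(verify_digest(tampered, anchored))
```

A digest proves that evidence has not changed since anchoring; it says nothing about whether the evidence was correct when it was captured.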

TenetSafe supports governance and auditability; it does not by itself certify legal compliance or workflow correctness.

Add it like any other node. Collect and analyse data locally without exposing raw payloads.

n8n has seen rapid growth in popularity—especially in 2025 and 2026—reflecting its shift toward AI-native automation. In late 2025, it reportedly surpassed 230,000 active users and reached a valuation around $2.5B, signaling strong momentum for agentic enterprise workflow automation. Teams can build agentic workflows without losing visibility into what’s happening at each step.

We at TenetSafe decided to support n8n because it aligns with our values: transparency over black-box orchestration, maintainability over brittle automation, and flexibility over lock-in. Its emphasis on a "fair-code" approach and clear workflow structure makes it easier to attach evidence to the right moments in a run, and to reconstruct decisions during review.

TenetSafe is a natural fit for n8n because it:

Request a live demo and see TenetSafe in action.

You already have logs and dashboards. TenetSafe adds a clear, shared explanation of what your AI workflows did, why they failed, and where risk is building.

Today’s reality

Raw data, no narrative

With TenetSafe

Local AI turns evidence into insight

When an AI system makes a mistake, logs are not enough.

Logs are not evidence.

A tamper-evident, independent record of declared intent, execution outcomes, and human oversight.

TenetSafe acts as an independent witness to every AI workflow execution, binding human authority to system actions in a verifiable evidence chain.
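
One common way to make a sequence of records tamper-evident is a hash chain, where each link's digest covers the previous digest plus the current entry. The sketch below illustrates that general technique under stated assumptions; it is not a description of TenetSafe's internal format. The entry fields, the `GENESIS` value, and the use of SHA-256 are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed starting value for the first link


def link_digest(prev_digest: str, entry: dict) -> str:
    """Hash the previous digest together with the canonical form of an entry."""
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_digest + payload).encode("utf-8")).hexdigest()


def build_chain(entries: list) -> list:
    """Return one digest per entry, each depending on all earlier entries."""
    digests = []
    prev = GENESIS
    for entry in entries:
        prev = link_digest(prev, entry)
        digests.append(prev)
    return digests


def chain_is_intact(entries: list, digests: list) -> bool:
    """Rebuild the chain from the entries and compare it with stored digests."""
    return build_chain(entries) == digests


# Hypothetical entries covering intent, execution, and oversight.
entries = [
    {"type": "intent", "declared_by": "ops-lead", "scope": "reply to complaint"},
    {"type": "execution", "outcome": "AI Action Approved"},
    {"type": "oversight", "outcome": "Email Sent"},
]
digests = build_chain(entries)

# Editing an early entry changes its digest and every digest after it.
altered = [dict(entries[0], scope="refund customer")] + entries[1:]
print(chain_is_intact(entries, digests))
print(chain_is_intact(altered, digests))
```

Because each digest depends on everything before it, an entry cannot be silently altered or reordered without invalidating the rest of the chain.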

TenetSafe is built for organizations where "trust me" isn't enough — and where clear evidence can unblock enterprise deals.

Automating procurement, QA, or logistics? When physical or high-value systems are touched by AI, you'll need proof of what happened, and why.

Building AI features for regulated clients? Prove that your AI agents separate data and decisions correctly, following declared intent and scope.

Moving from PoC to Pilot? Your safety and compliance teams won't approve "black box" agents. Give them the evidence layer they require.

Best fit for: Automation Leads, Compliance Officers & AI Architects enabling secure AI adoption.

Add tamper-evident architecture to your workflows.

Product Evolution

v097

Cryptographically verifiable records of intent, execution, and oversight to improve defensibility.

v098

Raw evidence stays inside customer infrastructure while the Hub stores only signed digest anchors.

v099

TenetSafe now turns trusted evidence into plain-language workflow insight, diagnostics, and early risk signals.

Built on trusted local evidence, TenetSafe generates business-readable insight for daily operations while preserving your compliance posture.

Each run gets a concise plain-language explanation of what happened, linked to the underlying evidence context for verification.

Failed executions include probable cause, likely failure location, and recommended next checks to shorten triage time.

TenetSafe flags likely privacy and governance hotspots as operational signals so teams can prioritize investigation early.

Summaries combine outcomes, narratives, and risk trends so non-technical stakeholders can monitor automation health quickly.

AI-generated insight is assistive and trace-linked to evidence records; it does not certify legal compliance or workflow correctness.

Move from raw logs to business-readable execution understanding. TenetSafe links local evidence, AI-generated insights, and independent digest verification in one operator-ready timeline.

Workflow: Customer Complaint Classification & Automation

Outcome: AI Action Approved

Outcome: Email Sent


High-risk rules under the EU AI Act are now expected to bite from 2027/28, giving you time to build a serious governance backbone instead of scrambling later. TenetSafe provides the reconstructability infrastructure that supports Article 12 (Record-Keeping), Article 14 (Human Oversight), and broader trustworthy-AI expectations from boards, regulators, and customers. Compliance becomes a by-product of running operationally visible automation with evidence, not a blocker to deploying useful automation today.

We are building the foundation for trustworthy, regulated AI—so organizations can experiment, deploy, and scale automations with confidence. Read our full Vision & Mission.

Ready to improve workflow clarity and prove integrity at the same time?